The twenty-first-century human being has become a skillful, talented and

wonderful innovator. As a result, the contemporary world is full of

advanced technology. Almost all production lines, processing

procedures and manufacturing techniques have been automated.

That means human work has been made easier. What is the main cause? It

is the invasion of the COMPUTER. Its influence has more advantages than

disadvantages. Technical frauds do happen in connection

with computer technology, but we cannot say that the computer itself is the

culprit.

Nevertheless, in this article I am presenting some simple facts

about computers and their functions, which should be useful to you, as we all deal

with computers in our day-to-day life.

What is a Computer?

A computer is a machine that manipulates data according to a list of

instructions.

Machine - A ‘machine’ is any device that uses energy to perform

some activity.

Data - In computer science, ‘data’ is anything in a form suitable

for use with a computer. Data is often distinguished from programs. A

program is a set of instructions that detail a task for the computer to

perform. In this sense, data is thus everything that is not a program.

Code instructions - The steps given to a computer to fulfill a

specific task.
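The distinction between a program and its data can be illustrated with a minimal Python sketch. This is only an illustration under stated assumptions: the function `run` and the two instructions `add` and `multiply` are hypothetical names invented for this example, not part of any real machine.

```python
# A toy "computer": the instruction names and the run() helper are
# illustrative assumptions, not a real instruction set.

def run(program, data):
    """Apply each instruction of the program, in order, to every data value."""
    values = list(data)  # work on a copy so the input data is left untouched
    for operation, operand in program:
        if operation == "add":
            values = [value + operand for value in values]
        elif operation == "multiply":
            values = [value * operand for value in values]
        else:
            raise ValueError(f"unknown instruction: {operation}")
    return values


# The program is the list of instructions; the data is what it works on.
program = [("add", 1), ("multiply", 2)]
data = [1, 2, 3]

print(run(program, data))  # [4, 6, 8]
```

The same machine gives different results when it is handed a different program, while the data stays the same. That separation is what the definitions above describe.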

Although mechanical examples of computers have existed throughout

history, the first modern computers were developed in the mid-20th century

(the 1940s).

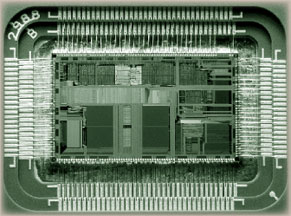

The first electronic computer was the size of a large room, consuming

as much power as several hundred modern personal computers (PCs). Modern

computers based on tiny integrated circuits are millions to billions of

times more capable than the early machines, and occupy a fraction of the

space.

Simple computers are small enough to fit into a wristwatch, and can

be powered by a watch battery.

Personal computers in their various forms are icons of the

Information Age and are what most people think of as a “computer”, but the

embedded computers found in devices ranging from fighter aircraft to

industrial robots, digital cameras, and toys are the most numerous.

The ability to store and execute lists of instructions called

programs makes computers extremely versatile, distinguishing them from

calculators.

A program is a methodical set of instructions given to a computer to

do specific tasks.

History of computing

The first use of the word “computer” was recorded in 1613, referring

to a person who carried out calculations, or computations, and the word

continued to be used in that sense until the middle of the 20th century.

From the end of the 19th century onwards though, the word began to take

on its more familiar meaning, describing a machine that carries out

computations.

The history of the modern computer begins with two separate

technologies, automated calculation and programmability, but no single

device can be identified as the earliest computer, partly because of the

inconsistent application of that term.

Examples of early mechanical calculating devices include the

‘abacus’, the ‘slide rule’ and arguably the ‘astrolabe’ and the ‘Antikythera

mechanism’ (which dates from about 150-100 BC). Hero of Alexandria (c.

10-70 AD) built a mechanical theater which performed a play lasting 10

minutes and was operated by a complex system of ropes and drums that can be

considered a means of deciding which parts of the mechanism performed which

actions. This is the essence of programmability.

The ‘castle clock’, an astronomical clock invented by Al-Jazari in

1206, is considered to be ‘the earliest programmable analog computer’.

It displayed the zodiac and the solar and lunar orbits, and featured a crescent

moon-shaped pointer travelling across a gateway that caused automatic doors

to open every hour, and five robotic musicians who played music when struck

by levers operated by a camshaft attached to a water wheel. The clock

could be re-programmed to compensate for the changing

lengths of day and night throughout the year.

The end of the Middle Ages saw a re-invigoration of European

mathematics and engineering. Wilhelm Schickard’s 1623 device was the

first of a number of mechanical calculators constructed by European

engineers, but none fit the modern definition of a computer, because

they could not be programmed.

All modern computers implement some form of stored-program

architecture, making it the single trait by which the word “computer” is

now defined.

While the technologies used in computers have changed dramatically

since the first electronic, general-purpose computers of the 1940s, most

still use the von Neumann architecture.
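The stored-program idea can be sketched in a few lines of Python. This is a simplified illustration, not a description of any real processor: the function `run_stored_program` and the instructions `LOAD`, `ADD`, `STORE` and `HALT` are hypothetical names chosen for this example. The point it shows is that instructions and data sit in the same memory, and the machine fetches and executes the instructions one at a time.

```python
# A toy stored-program machine. The instruction set (LOAD, ADD, STORE, HALT)
# is an assumption made for illustration only.

def run_stored_program(memory):
    """Run a fetch-execute loop over one memory holding program and data."""
    memory = list(memory)   # copy, so the caller's memory is not modified
    accumulator = 0         # single working register
    program_counter = 0     # address of the next instruction to fetch
    while True:
        operation, address = memory[program_counter]  # fetch
        program_counter += 1
        if operation == "LOAD":       # copy a memory cell into the register
            accumulator = memory[address]
        elif operation == "ADD":      # add a memory cell to the register
            accumulator += memory[address]
        elif operation == "STORE":    # write the register back to memory
            memory[address] = accumulator
        elif operation == "HALT":     # stop and hand back the final memory
            return memory
        else:
            raise ValueError(f"unknown instruction: {operation}")


# Cells 0-3 hold the program; cells 4-6 hold the data it works on.
memory = [
    ("LOAD", 4),   # 0: accumulator = memory[4]
    ("ADD", 5),    # 1: accumulator += memory[5]
    ("STORE", 6),  # 2: memory[6] = accumulator
    ("HALT", 0),   # 3: stop
    2,             # 4: first number
    3,             # 5: second number
    0,             # 6: result goes here
]

print(run_stored_program(memory)[6])  # 5
```

Because the program is just content in memory, changing what the machine does means changing the stored cells, not rebuilding the machine.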

Microprocessors are miniaturized devices that often implement

stored-program CPUs. Computers using vacuum tubes as their electronic elements

were in use throughout the 1950s, but by the 1960s had been largely

replaced by transistor-based machines, which were smaller, faster, and

cheaper to produce, required less power, and were more reliable.

The first transistorized computer was demonstrated at the University

of Manchester in 1953.

In the 1970s, integrated circuit technology and the subsequent creation

of microprocessors, such as the ‘Intel 4004’, further decreased the size

and cost and further increased the speed and reliability of computers. By

the 1980s, computers had become sufficiently small and cheap to replace

simple mechanical controls in domestic appliances such as washing

machines. The 1980s also witnessed the arrival of home computers and the now ubiquitous

personal computer.

With the evolution of the Internet, personal computers are becoming

as common as the television and the telephone in the household. Modern

smartphones are fully programmable computers in their own right, and as of

2009 may well be the most common form of such computers in existence.

- Tharindu Weerasinghe
